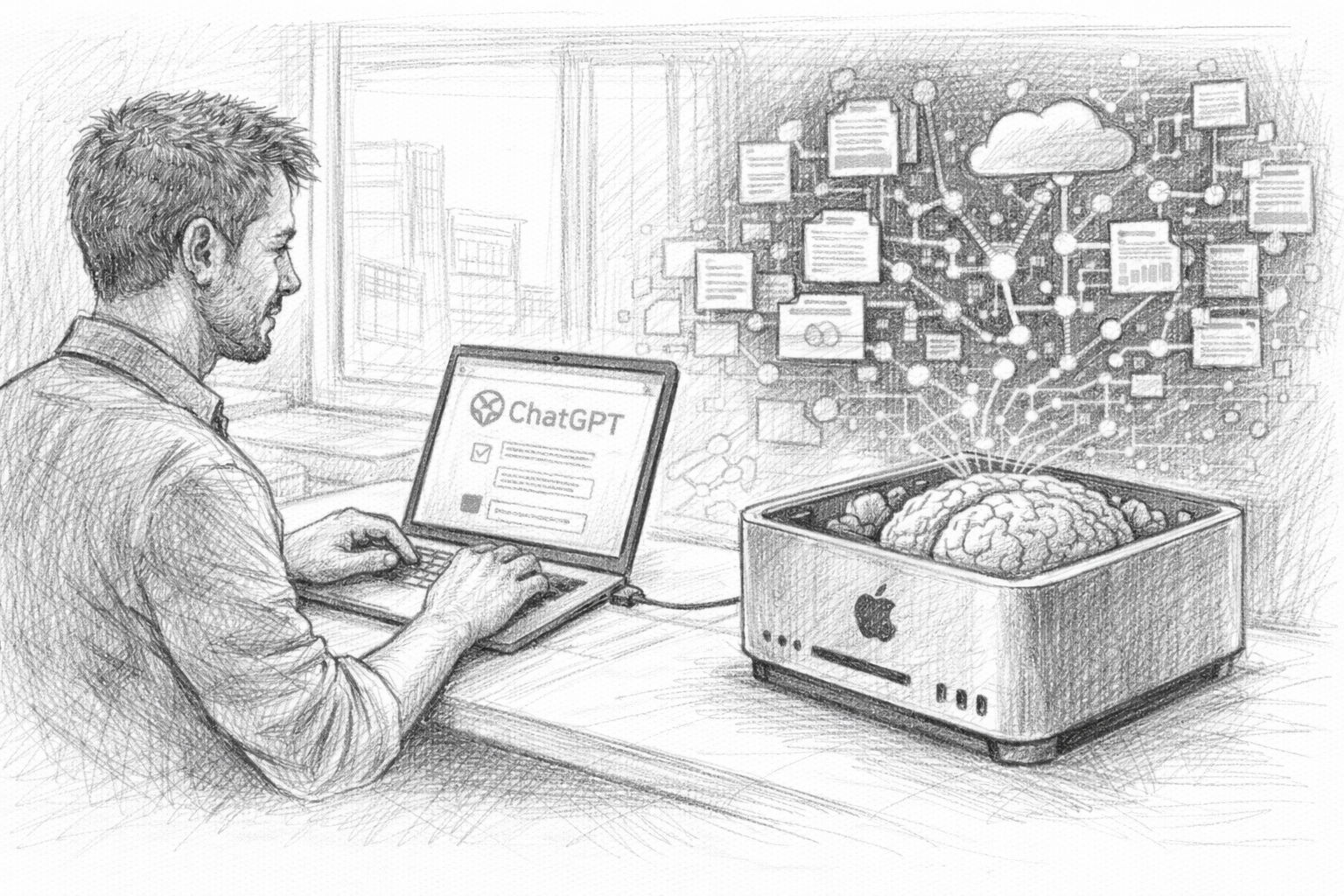

In the first part of this series, we saw that the ChatGPT data export is much more than a technical feature. Your export contains thoughts, ideas, analyses and conversations that have accumulated over a long period of time. But as long as this data just sits on your hard disk, it remains exactly that: an archive. The crucial step is to make this information usable again, and this is where building a personal knowledge AI begins.

The idea is surprisingly simple: an AI should not only draw on general knowledge, but also be able to access your own data. It should search your previous conversations, find relevant content and incorporate it into new answers. This turns an ordinary AI into a kind of digital memory. This second part of the series looks at the practical side.
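To make the "search, find, incorporate" idea concrete, here is a minimal sketch in Python. It is an illustration, not the setup this series builds: the sample texts in `PAST_CONVERSATIONS` and the helper names `retrieve` and `build_prompt` are hypothetical, and plain keyword overlap stands in for whatever search method a real system would use. It also assumes the conversations have already been extracted from the export as plain strings.

```python
import re
from collections import Counter

# Hypothetical sample data: in practice these would be passages
# extracted from your own exported conversations.
PAST_CONVERSATIONS = [
    "We discussed backup strategies for a home NAS.",
    "Notes on sourdough hydration and baking times.",
    "Ideas for a newsletter about local history.",
]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, passage: str) -> int:
    """Count how often the query's distinct words occur in the passage."""
    query_words = set(tokenize(query))
    passage_counts = Counter(tokenize(passage))
    return sum(passage_counts[word] for word in query_words)


def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Return up to k passages with a non-zero score, best match first."""
    ranked = sorted(passages, key=lambda p: score(query, p), reverse=True)
    return [p for p in ranked[:k] if score(query, p) > 0]


def build_prompt(query: str, passages: list[str], k: int = 2) -> str:
    """Place the retrieved passages in front of the question as context."""
    hits = retrieve(query, passages, k)
    context = "\n".join(f"- {hit}" for hit in hits)
    return f"Context from earlier conversations:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    print(build_prompt("How long were the sourdough baking times?", PAST_CONVERSATIONS))
```

The string returned by `build_prompt` is what would be passed to a language model, so the model answers with your earlier notes in view. Keyword overlap is only the simplest possible stand-in for the search step; it misses passages that use different words for the same topic.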