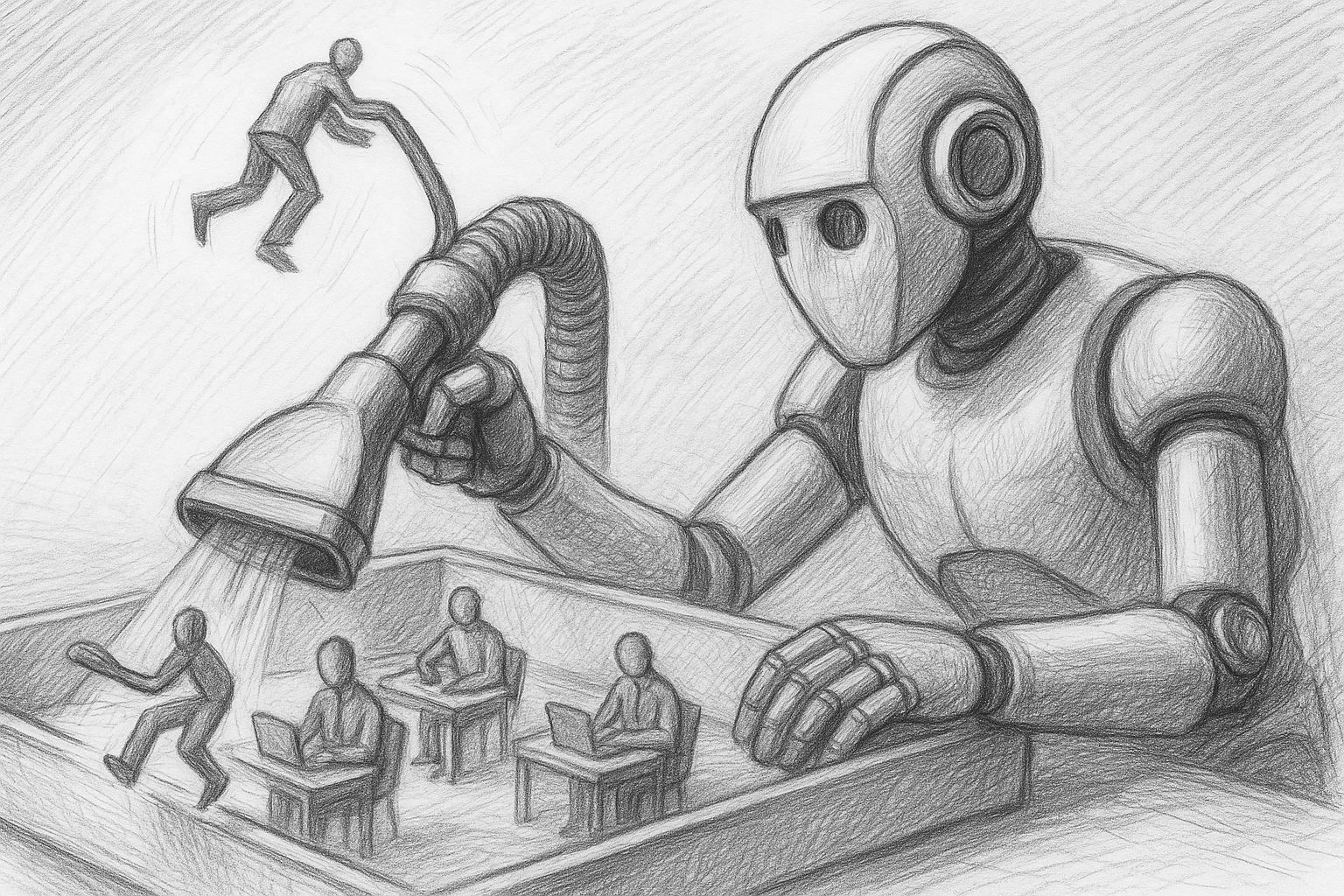

Anyone working with AI today is almost automatically pushed into the cloud: OpenAI, Microsoft, Google, assorted web UIs, tokens, limits, terms and conditions. This seems modern, but it is essentially a return to dependency: others determine which models you can use, how often, with which filters and at what cost. I'm deliberately going the other way: I'm currently building my own little AI studio at home, with my own hardware, my own models and my own workflows.

My goal is clear: local text AI, local image AI, training my own models (LoRA, fine-tuning), and all of this set up so that neither I as a freelancer nor, later, SME customers depend on the daily whims of some cloud provider. You could say it's a return to an old attitude that used to be quite normal: "You do important things yourself." Only this time, it's not about your own workbench, but about computing power and data sovereignty.