In an increasingly digitalized world, we spend a lot of time online: chatting, shopping, working, informing ourselves. At the same time, the rules on how content is shared, moderated or controlled are changing. The Digital Services Act (DSA), the European Media Freedom Act (EMFA), the planned Regulation to Prevent and Combat Child Sexual Abuse (CSAR, often referred to as „chat control“) and the AI Act are key pieces of legislation with which the European Union (EU) wants to regulate the digital environment.

These regulations may seem far away at first glance - but they have an impact on you as a private individual as well as on small and medium-sized companies. This article will guide you step by step: from the question „What is planned here?“ through the background and timelines to a change of perspective: What does this mean for you in everyday life?

In the first part, we take a look at what is actually planned, which major legislative projects are currently being discussed or have already been passed and the reasons for their introduction.

Latest news on planned EU censorship laws

14.04.2026: A current article on it-daily shows that the EU AI Act is increasingly forcing companies to systematically structure their AI activities, which have often been disorganized to date. The regulation came into force in 2024 and is being implemented gradually. The first concrete obligations have been in force since February 2025, such as bans on certain AI applications and the obligation to train employees in the use of AI. In the coming years - in particular by August 2026 - stricter requirements for so-called high-risk systems will follow, which will entail clear responsibilities for providers and users. Overall, it is clear that AI can no longer be used in isolation or experimentally, but must be organizationally embedded, documented and controlled. Companies are therefore faced with the task of redefining processes, responsibilities and competencies relating to AI in order to meet regulatory requirements and operate their systems in a secure and traceable manner.

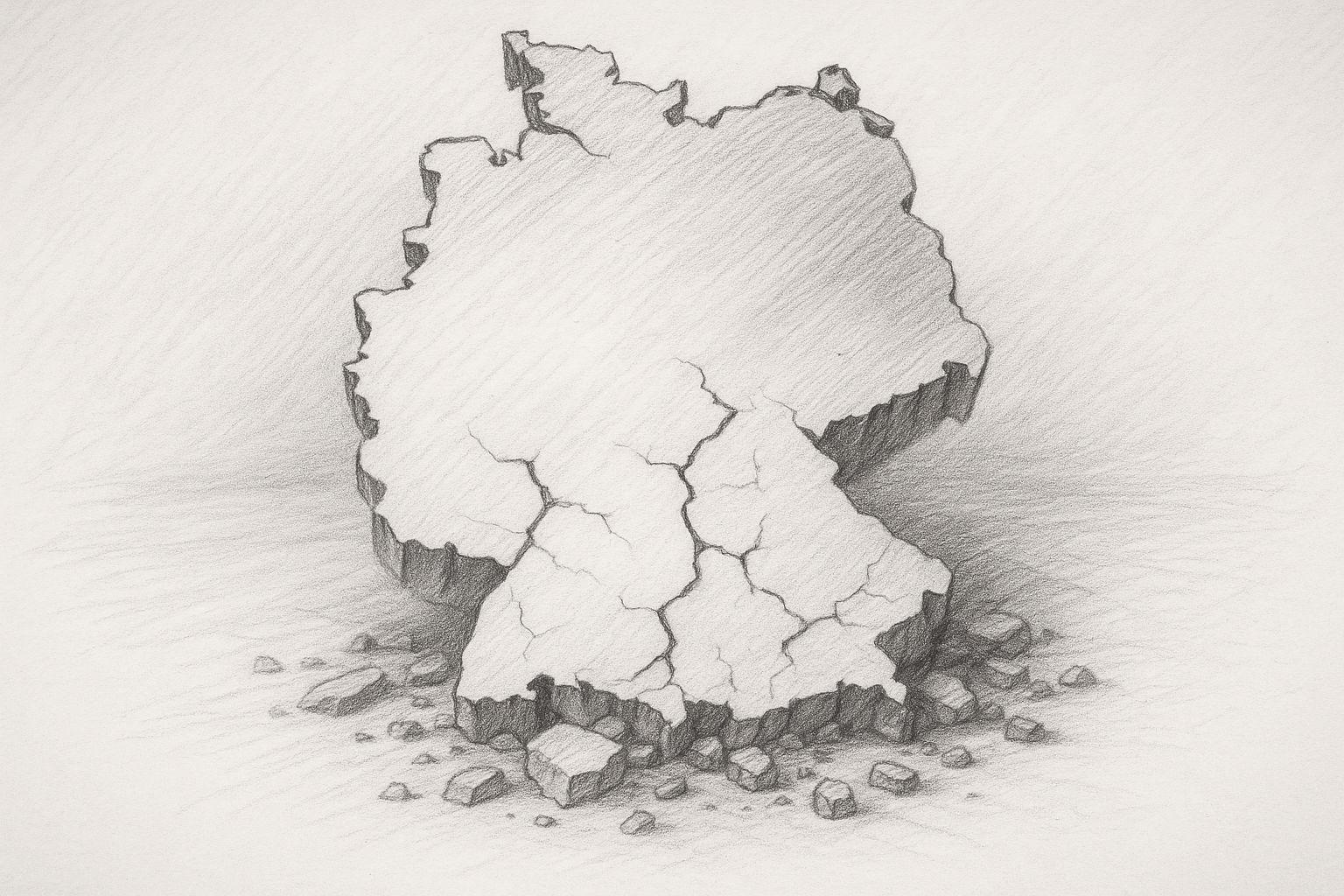

27.03.2026: The debate about EU chat control remains highly controversial and is increasingly becoming a political hot potato. As current reports show, the project continues to be caught between child protection and fundamental rights. While supporters emphasize that the scanning of private communications is necessary to effectively combat online abuse, critics warn against the introduction of mass surveillance without cause.

The fact that such measures could potentially encroach deeply on privacy and even affect encrypted communication is viewed particularly critically. At the same time, the political processes in the EU show that, despite repeated setbacks, the issue is not off the table and is still being pushed forward - sometimes behind closed doors. The discussion illustrates how difficult the trade-off between security and freedom has become. The dispute over chat control is therefore exemplary of a fundamental question facing modern societies: How far should state access to digital communication be allowed to go?

27.11.2025 - According to Tagesschau, the EU member states have agreed after lengthy discussions not to introduce mandatory chat controls. For the time being, services such as WhatsApp or Signal do not have to automatically scan their messages for depictions of sexualized violence against children. The original plan failed due to strong opposition from Germany, among others. Instead, the EU is now relying on voluntary measures from providers. A previously temporary exception, which allows voluntary scans despite data protection rules, is to be made permanent. After three years, the EU Commission wants to review whether an obligation would be necessary after all. According to the draft, the platforms should nevertheless actively reduce risks for children, for example through reliable age verification. A new EU center against child abuse is also planned, which will assist national authorities. Before the regulations come into force, the EU Council of Ministers and the European Parliament still have to agree on a joint version of the law.

A short time later, however, the Berliner Zeitung published a critical report which questions the Tagesschau's wording and the „voluntary“ nature of chat control. Patrick Breyer, a digital freedom activist, criticizes the voluntary approach in the report and warns the public not to be fooled by the term „voluntary“.

Experts and data protection advocates also warn that this voluntary nature is merely a back door and that fundamental rights could continue to be jeopardized, for example through client-side scanning or the establishment of an EU center for combating child abuse. Critics emphasize that this is by no means the end of the debate and that further political discussions will follow.

24.11.2025 - An article in the Berliner Zeitung reports that, according to insiders, the Regulation to Prevent and Combat Child Sexual Abuse (CSAR) - often referred to as „chat control“ - is to be waved through in the European Union without public debate. Although the explicit obligation to scan chats has been officially removed, the new draft contains a loophole: providers should continue to implement „appropriate risk mitigation measures“, which could effectively mean automated scanning. Critics warn that this could undermine end-to-end encryption and make private communications automatically searchable in future.

What the EU is currently planning: a simple overview

The EU has recognized this in recent years: The internet is changing rapidly. Platforms with millions or billions of users, artificial intelligence, new forms of communication - many legal frameworks date back to previous decades. The EU sees a lot of catching up to do here: it wants to make the digital space safer, protect fundamental rights and create fair conditions - both for users and for companies. For example, the DSA states:

„The aim is to create a safer digital space in which the fundamental rights of all users of digital services are protected.“

Because several legislative projects have been drawn up in parallel, the impression is created that „many things are now being regulated at the same time“ - and this creates uncertainty, especially for people who do not deal with digital law on a daily basis.

The major goals according to the EU

The most important objectives of these legislative packages can be summarized as follows:

- Protection against misuse: For example, the EU wants to use the CSAR draft to ensure that child sexual abuse material (CSAM) is recognized and deleted more quickly online.

- Protection against disinformation, election interference and social risks: The DSA expressly states that it is intended to limit the spread of illegal content and other risks.

- Media pluralism, transparency and fair competition: With the EMFA, the EU is striving for stronger regulation of the media landscape in order to limit influence and concentration.

- Rules for artificial intelligence and labeling requirements: The AI Act is intended to ensure that AI systems and their content are transparent in future - for example in the case of deepfakes or automated decisions.

- Fair conditions for companies: In addition to protecting users, the EU also wants clarity and a level playing field for providers (e.g. platforms). One objective of the DSA is to create a „uniform set of rules and competitive conditions for companies“.

Where do we stand today?

The current situation can be summarized as follows:

- With the DSA: The set of rules has already been adopted. Providers with a very large number of users („Very Large Online Platforms“) have been subject to particularly strict rules since 2023/24.

- With CSAR („chat control“): The proposal was presented by the EU Commission in 2022. However, many member states as well as data protection and civil liberties organizations have voiced strong criticism, so it has not yet been adopted.

- With the EMFA and AI Act: The EMFA has been formally adopted and applies from specified dates. The AI Act is in force, but many obligations apply in stages.

Important: Even if laws have been passed, this does not mean that all requirements apply immediately - there are often transitional periods, exceptions or technical implementations that are still open. In this first part, we have therefore worked out which regulations are in play, what the EU is aiming to achieve with them and where we currently stand. In the next chapter, we will look specifically at the individual major laws - in simple terms, so that it becomes clear: „What exactly is this?“

Chat Control / CSAR - Monitoring private communication

In 2022, the EU Commission presented its proposal for a Regulation to Prevent and Combat Child Sexual Abuse (CSAR), which many refer to as „chat control“. The core: providers of messenger services, chat platforms or other digital communication services should be obliged to search for, report and delete child sexual abuse material (CSAM).

The idea behind this is understandable: Abuse material online is a serious problem, and national laws alone are not always effective - the EU sees a need for a uniform standard.

But this is where the controversy begins: some versions of the proposal provide for communications to be searched even if they are end-to-end encrypted. In everyday terms, this means that your private chats, images or videos could be technically analyzed - before the messaging service encrypts or forwards them. Critics speak of mass surveillance.

The technology is sophisticated and risky: How can abuse material be detected reliably without risking false detections? How can data protection and encryption be guaranteed? A study shows that false-positive rates can be high, especially with client-side scanning.
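The false-positive concern is largely a base-rate effect, which a few lines of arithmetic can illustrate. The following is a minimal sketch only: the function name `scan_outcome` and all four input values (message volume, prevalence, detection rate, false-positive rate) are hypothetical assumptions chosen for illustration, not figures from the study mentioned above or from any real scanning system.

```python
def scan_outcome(messages, prevalence, detection_rate, false_positive_rate):
    """Estimate what a hypothetical scanner would flag in a batch of messages."""
    illegal = messages * prevalence                 # messages that really are abuse material
    harmless = messages - illegal                   # everything else
    true_hits = illegal * detection_rate            # illegal messages correctly flagged
    false_alarms = harmless * false_positive_rate   # harmless messages wrongly flagged
    flagged = true_hits + false_alarms
    # Share of all flagged messages that are actually illegal
    precision = true_hits / flagged if flagged else 0.0
    return true_hits, false_alarms, precision


# All four inputs below are made-up assumptions for illustration only.
true_hits, false_alarms, precision = scan_outcome(
    messages=1_000_000_000,      # messages scanned
    prevalence=1e-6,             # one message in a million is illegal
    detection_rate=0.9,          # scanner catches 90% of illegal material
    false_positive_rate=0.001,   # 0.1% of harmless messages are misflagged
)
print(f"correct hits: {true_hits:,.0f}")
print(f"false alarms: {false_alarms:,.0f}")
print(f"share of flags that are correct: {precision:.4%}")
```

With these assumed inputs, roughly 900 correct hits stand against about a million false alarms, so fewer than one flag in a thousand is correct. Real rates may differ considerably in either direction; the point is only that a small error rate applied to a very large volume of harmless messages can dominate the result.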

In short, the proposal combines a legitimate protection mandate with considerable risks to fundamental rights. For citizens, this means that private communications could be subject to stricter controls in future - even if they have nothing to do with abuse. For small businesses, this means that if you operate messenger channels or customer communication, you would have to be prepared for the possibility of new legal obligations or technical audits.

| Aspect | Description |
|---|---|
| Name | Regulation to Prevent and Combat Child Sexual Abuse (CSAR), colloquially known as „chat control“ |
| Main objective according to the EU | Prevent, report and delete online dissemination of child sexual abuse material (CSAM); establish an EU reporting center. |
| Who is primarily affected? | Providers of communication services (messengers, chats, hosting platforms, cloud services), in some cases also providers of encrypted communication. |
| Central instruments | (Partial) scanning of content for known abuse patterns; reporting obligations to authorities/EU center; mandatory or „voluntary“ monitoring measures depending on the risk classification |
| Current status | Proposed regulation under negotiation since 2022; strong opposition from several member states and civil rights organizations; compromise texts with „voluntary“ scanning under discussion |
| Arguments of the proponents | Without uniform EU rules, CSAM cannot be combated effectively; providers must take responsibility; technical means are necessary to track down perpetrators |
| Critics' arguments | Danger of blanket surveillance of all citizens; weakening of end-to-end encryption („client-side scanning“); high number of possible false alarms and false suspicions; creation of a permanent monitoring infrastructure that can be expanded politically |
| Consequences for citizens | Private communication could be checked (partially) automatically; uncertainty with sensitive content (photos, documents, chats); risk of false suspicions and data storage |
| Consequences for SMEs | Customer communication via scanned services is no longer completely private; possible conflicts with data protection and trade secrets; need for adaptation in the selection and use of communication channels |

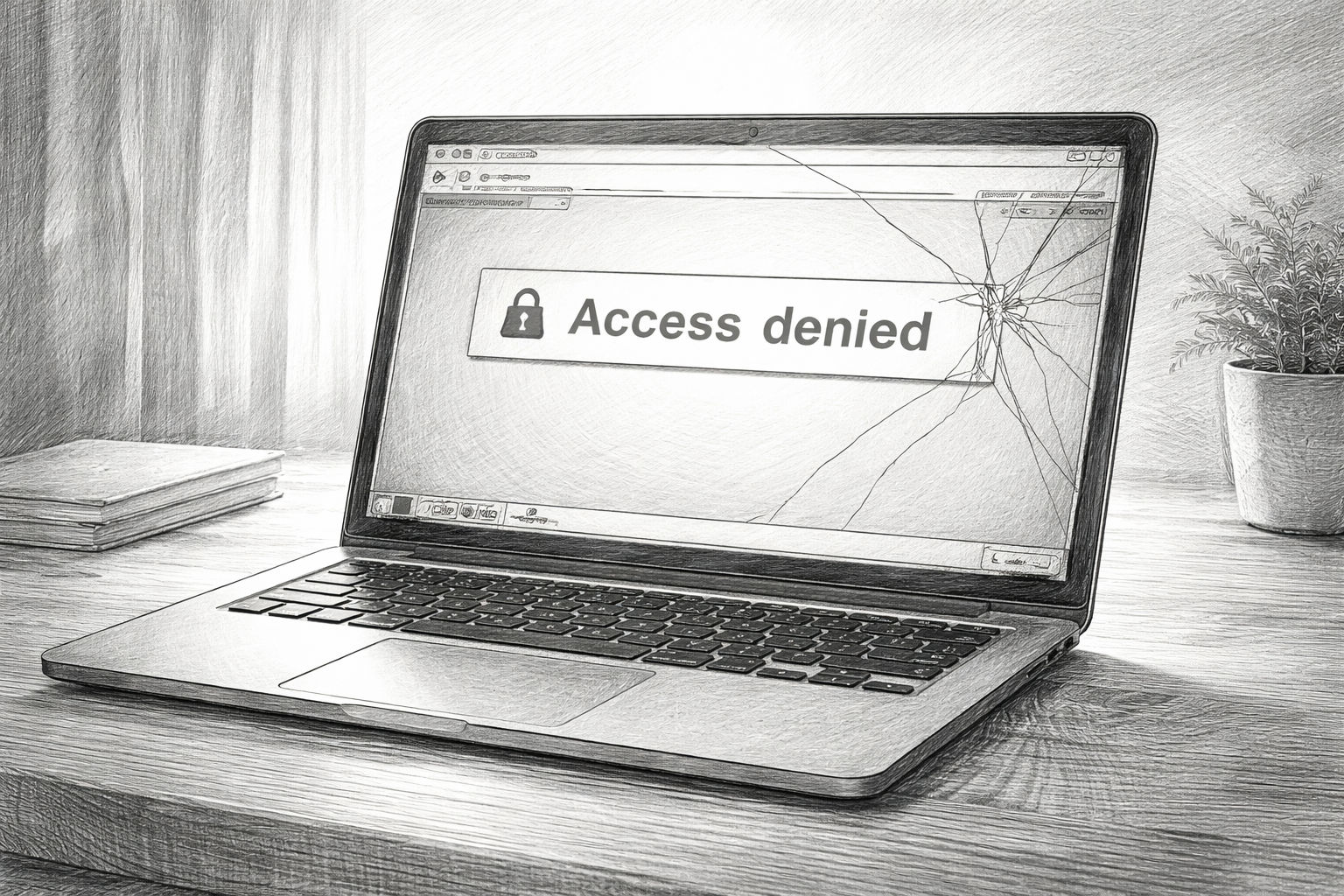

Digital Services Act (DSA) - rules for platforms and their deletion obligations

With the Digital Services Act (DSA), the EU has created a comprehensive set of rules that was already adopted in 2022. It is primarily aimed at digital services - hosting providers, platforms, search engines - with the aim of making the internet safer, more transparent and fairer. The most important obligations include:

- Providers must set up reporting and redress systems that users can use to report illegal content (e.g. hate speech, terrorist propaganda, fraud).

- Platforms must respond to such reports, i.e. delete content or block access once they become aware of illegal content.

- For „very large“ platforms (e.g. over 45 million EU users), stricter requirements apply: risk analyses, auditing of recommendation algorithms, disclosure of moderation practices.

- Transparency obligations: Users should better understand why content has been deleted or blocked; platforms must report on this.

For the everyday life of a person, this means that if you post articles on platforms - for example on social networks or forums - platforms will have to make stricter decisions in future. In case of doubt, content could be removed more quickly.

For a small company, this means that if your website, forum or platform has interactive user posts, you need to carefully review the terms of use, reporting procedures and deletion processes. Even if you are not one of the really big platforms, indirect pressure can arise, especially through guidelines on how users should be protected from harmful content.

| Aspect | Description |
|---|---|
| Name | Digital Services Act (DSA) - Regulation (EU) 2022/2065 |
| Main objective according to the EU | Secure digital space for users; protection of fundamental rights online; uniform set of rules for digital services in the EU |
| Who is primarily affected? | Intermediary services (access, caching, hosting); online platforms and marketplaces; „Very Large Online Platforms“ (VLOPs) and search engines with 45 million EU users or more |
| Central duties | Reporting channels for illegal content; obligation to remove quickly after knowledge; transparency reports on moderation; risk analyses and audits for very large platforms; cooperation with „trusted flaggers“ |
| Current status | In force, already fully effective for large platforms; Commission conducts proceedings against individual providers (e.g. due to inadequate moderation) |
| Arguments of the proponents | Platforms take responsibility for the risks of their services; more transparency about deletion and recommendation practices; better protection against hate, fraud, disinformation and illegal offers |
| Critics' arguments | Danger of „overblocking“ (too much is deleted to avoid penalties); de facto privatization of censorship: platforms decide what remains visible; flexible terms such as „systemic risks“, so that politically sensitive content can be thinned out |
| Consequences for citizens | Posts can be blocked or made invisible more quickly; more formal ways to challenge decisions, but at a high cost; filter bubbles are not necessarily getting smaller, the intervention is rather „from above“ |
| Consequences for SMEs | Marketing and visibility are even more dependent on the platform algorithm; content on sensitive topics (health, politics, society) is more likely to be deleted; separate comment areas require more moderation effort and clear rules |

European Media Freedom Act (EMFA) - the new media law

The European Media Freedom Act (EMFA) formally came into force on May 7, 2024 and applies in full from August 8, 2025. With it, the EU wants to safeguard the independence and diversity of the media - i.e. publishing houses, broadcasters, online media - while at the same time creating new rules for cooperation between media and platforms. Important content:

- Protection of editorial independence and journalistic sources.

- Transparency about media ownership structures so that it is clear who is behind a medium.

- Regulation of government advertising and how governments can influence media through ads.

A special feature: media service providers should be protected against platforms, for example so that their content is not simply suppressed at will by large platforms.

For the user, this means that theoretically a greater diversity of opinions and media sources could emerge, with clear labeling and better conditions for quality media. However, there will also be a new level of scrutiny - platforms will have to take special care with „recognized media“ in future.

For a small company that publishes content (e.g. blog, newsletter or online magazine), this means that you will find yourself in a new framework in which your content may be evaluated differently - depending on whether you are considered a „medium“ or not, and how platforms deal with such content.

| Aspect | Description |
|---|---|
| Name | European Media Freedom Act (EMFA) - European media freedom law |
| Main objective according to the EU | Protection of media pluralism and editorial independence; limiting political influence on the media; transparency about ownership structures and state advertising funds |
| Who is primarily affected? | Media companies (print, broadcasting, online); public service media; platforms in dealing with media content |
| Central instruments | European Board for Media Services (cooperation of national regulators); rules for state advertising and funding; special treatment of „recognized media“ by platforms (e.g. privileged treatment in moderation) |
| Current status | Adopted, entry into force 2024; main provisions apply from 2025 |
| Arguments of the proponents | Better protection of reputable media from political pressure; transparency against covert influence and media concentration; protection of editorial decisions from interference by owners or states |
| Critics' arguments | Danger of a „media privilege“ for state-loyal or established providers; platforms come into conflict between DSA deletion obligations and EMFA privileges; smaller or alternative media without formal status could be disadvantaged |
| Consequences for citizens | Visible content could be more strongly influenced by „recognized media“; demarcation between established media and independent offerings is becoming sharper; more critical or new voices may have a harder time building reach |
| Consequences for SMEs | Own content (blogs, magazines, newsletters) competes with privileged media environments; cooperation with media (advertising, sponsored content) is more strictly regulated; companies that want to act as a medium themselves must observe additional requirements |

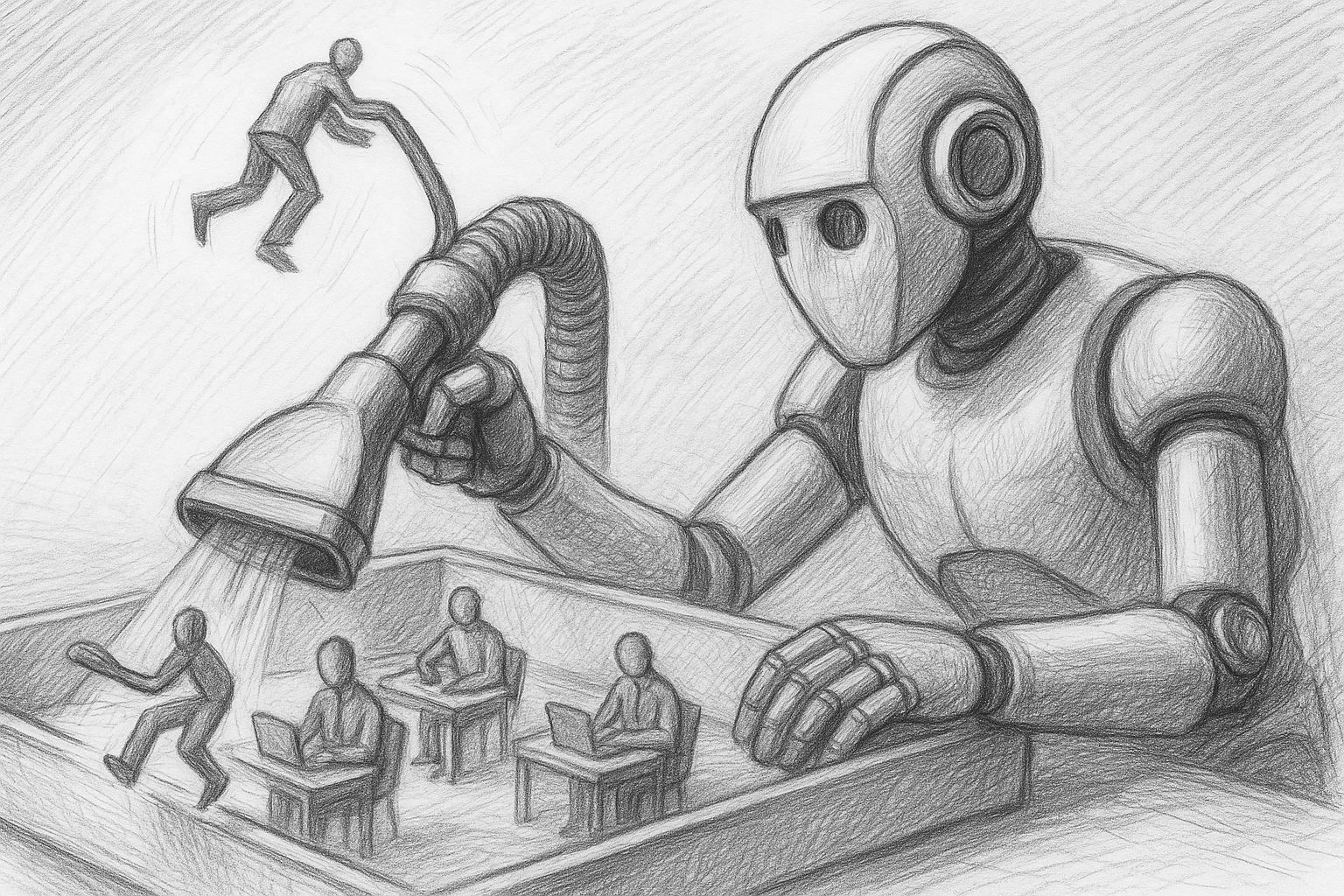

AI Act - rules for artificial intelligence, deepfakes & co.

With the Artificial Intelligence Act (AI Act) the EU has created one of the world's first comprehensive pieces of legislation on AI regulation. It is designed to be technology-neutral, i.e. not only for currently known AI systems, but also for future developments. The core feature is a risk-based approach: the higher the risk to fundamental rights or security, the stricter the obligations. Examples:

- AI systems with unacceptable risk (e.g. social scoring systems) are prohibited.

- High-risk AI systems (e.g. job application or biometric systems) are subject to strict regulations.

- Other AI systems must be labeled, for example if content has been generated automatically (e.g. deepfakes).

For citizens, this means that if you come across content in the future, such as a video or text that was created automatically, this content may be marked as „AI-generated“ - so that you can recognize who or what is behind it. For companies, this means that if you use AI tools - e.g. in marketing, for document production, customer service - you must check whether your application falls under a risk category, whether labeling requirements apply or whether conditions must be met.
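For a company taking stock of its AI tools, the risk-based logic can be pictured as a simple lookup from use case to tier to duties. The sketch below is a hedged illustration, not a legal classification: the names `RiskTier`, `EXAMPLE_TIERS`, `OBLIGATIONS` and `checklist` are hypothetical, and the example use cases and duties are loosely taken from the categories named in this article, not from the legal text itself.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"   # prohibited practices
    HIGH = "high"                   # strict requirements
    TRANSPARENCY = "transparency"   # labeling and disclosure duties
    MINIMAL = "minimal"             # no specific duties in this sketch


# Illustrative assignment only; a real classification needs legal review.
EXAMPLE_TIERS = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "applicant screening": RiskTier.HIGH,
    "biometric identification": RiskTier.HIGH,
    "customer chatbot": RiskTier.TRANSPARENCY,
    "deepfake video": RiskTier.TRANSPARENCY,
    "spell checker": RiskTier.MINIMAL,
}

# Simplified duties per tier, paraphrased from the article's overview.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["do not deploy"],
    RiskTier.HIGH: ["risk assessment", "documentation", "data quality checks"],
    RiskTier.TRANSPARENCY: ["label AI-generated content", "tell users they are talking to an AI"],
    RiskTier.MINIMAL: [],
}


def checklist(use_case):
    """Return (tier, duties) for a known use case, or a review note if unknown."""
    tier = EXAMPLE_TIERS.get(use_case)
    if tier is None:
        return None, ["unclassified: seek legal review"]
    return tier, OBLIGATIONS[tier]


for case in ("customer chatbot", "applicant screening", "internal forecasting"):
    tier, duties = checklist(case)
    print(case, "->", tier.value if tier else "unknown", duties)
```

The deliberate design choice here is that an unknown use case never falls silently into the minimal tier; it is flagged for review, which mirrors the uncertainty many smaller companies face when classifying their own tools.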

| Aspect | Description |
|---|---|
| Name | Artificial Intelligence Act (AI Act) - EU regulation on AI |
| Main objective according to the EU | Ensure that AI systems are secure and trustworthy; protection of fundamental rights and consumers; promoting innovation under clear rules |
| Who is primarily affected? | Providers and operators of AI systems (from start-ups to large corporations); users who integrate AI into products or services; specifically: manufacturers of „high-risk AI“ |
| Risk categories | Prohibited AI (e.g. certain forms of social scoring); high-risk AI (e.g. biometric systems, certain decision support); AI with transparency obligations (e.g. chatbots, deepfakes); minimal-risk AI (e.g. simple tools without deep intervention in rights) |
| Central duties | Risk assessment and documentation; labeling AI-generated content in certain cases; quality and data requirements for high-risk AI; transparency towards users (e.g. „You are talking to an AI“) |
| Current status | Adopted, takes effect in stages; individual obligations already apply, others will follow after transitional periods |
| Arguments of the proponents | Protection against non-transparent, unfair or dangerous AI systems; building trust: users know when AI is in play; minimum standards for quality and data security |
| Critics' arguments | Complexity and bureaucracy, especially for small providers; uncertainty as to whether an application is „high risk“ or not; risk of innovation and AI development shifting to less regulated regions; inhibition threshold for SMEs to use AI at all |
| Consequences for citizens | More labeling of AI content (texts, images, videos); better protection for automated decisions, but also more formality; more conscious handling of „machine-generated“ information |
| Consequences for SMEs | Need to document the use of AI (internally and externally); possible labeling obligations in marketing or customer contact; advice may be required to correctly classify risks and obligations; those who are well prepared can still use AI as a competitive advantage |

A timeline of the most important steps

2022-2023: The phase of major proposals

During this phase, several key projects were officially introduced or advanced: the Digital Services Act (DSA), the Regulation to Prevent and Combat Child Sexual Abuse (CSAR, also known as „chat control“) and the European Media Freedom Act (EMFA).

- In May 2022, the EU Commission presented the CSAR proposal to combat online depictions of child sexual abuse.

- On April 23, 2022, a political agreement was reached between Parliament and the Council on the DSA.

- Between June and December 2023, negotiations were held and agreements reached on the EMFA draft.

The playing field was therefore staked out at this stage: the EU decided that more comprehensive digital rules were needed.

2024: The first laws come into force

The implementation phase began in 2024: some regulations have been adopted, others are being prepared for application.

- The DSA (Regulation (EU) 2022/2065) became fully applicable on February 17, 2024, and many services had to prepare for compliance.

- The EMFA was formally adopted (by the Council on March 26, 2024) and the implementation targets were set.

- With the CSAR, however, the situation remains uncertain: the final decision has been postponed several times. For example, the interim regulation was extended until April 3, 2026.

This phase was characterized by transition periods, preparations and political disputes - the clock is ticking, but not everything is active yet.

2025: The decisive phase

Important decisions will be made in 2025.

- The DSA duties for very large platforms („VLOPs“) are increasingly taking effect; deadlines are expiring.

- The EMFA is fully applicable - many provisions are to apply from a cut-off date.

- With the CSAR, a decision is on the horizon: Some member states have announced resistance, votes have been postponed or blocked.

For citizens and businesses, it is becoming more tangible: no longer just „draft legislation“, but „regulation to which one must adapt“.

2026 and later: what is foreseeable to come

The following steps are also relevant beyond 2025. Additional technical standards may still apply to the DSA: the EU Commission is monitoring implementation and impact. A final regulation may follow for the CSAR - or the proposal may be significantly amended or postponed. Further special provisions will apply to the EMFA, for example on media platform cooperation, interfaces or advertising.

Generally speaking, the more complex the digital infrastructure becomes (AI, platforms, communication), the more regulation is expected to be required. For the reader, this means that the topic is not closed - it will become part of our everyday digital lives. Preparation and attention are therefore advisable.

What does this mean for small and medium-sized enterprises?

Corporations such as Meta, Google and Microsoft have their own legal departments, data protection teams and compliance officers. Small and medium-sized enterprises (SMEs) generally do not. Nevertheless, the new EU regulations also affect them - sometimes directly, often indirectly via the platforms, tools and communication channels used.

The following section looks at what these developments can mean in concrete terms for smaller companies: from one-man businesses to SMEs with 50 or 200 employees.

More rules in the background - even if you don't see them immediately

Many of the new rules - for example in the Digital Services Act (DSA) or the AI Act - are officially aimed primarily at „very large“ platforms or certain AI providers. Nevertheless, the consequences also reach smaller companies:

- via the platforms on which they advertise or publish content,

- via the tools they use (e.g. AI systems, newsletter software, chat systems),

- and via communication obligations that are gradually arising for smaller providers as well.

Example: The DSA obliges online platforms to efficiently remove illegal content and create transparency. For small companies that reach customers via Facebook, Instagram, YouTube or platform marketplaces, this means that content can be deleted or restricted more quickly than before - even if they themselves comply with the law.

Concrete effects in the everyday life of an SME

1. Comment sections, forums and customer ratings

Many companies today use:

- Blog articles with comment sections

- Forums or support communities

- Rating functions or guest books

- Social media channels with comments

The new rules increase the pressure to keep an eye on user content:

- Complaints must be taken seriously and examined.

- Obviously illegal content should be removed quickly to avoid liability issues.

- Platforms themselves react more sensitively: a shitstorm with borderline content can lead more quickly to bans, loss of reach or blocks.

- Supporters say: This makes sense because it creates clear responsibilities. Anyone who offers a public platform (even a small forum) must prevent abuse. For reputable providers, this is not a disadvantage, but a sign of quality.

- Critics argue against this: Small companies have neither the time nor the staff to legally evaluate comments or to moderate them. For fear of problems, they tend to switch off comment functions completely - which further impoverishes open exchange on the Internet.

2. Communication channels: email, messengers and „chat control“

The planned regulation to combat child abuse (CSAR, „chat control“) is formally aimed at providers of communication services who are supposed to search for and report abuse material. For traditional SMEs, this does not mean that they have to set up their own scanners immediately. But:

- Many use messenger services (WhatsApp, Signal, Teams, Slack, etc.) both internally and in customer communication.

- If these services are allowed to scan content (or have to scan it), corporate communications are potentially no longer as confidential as they used to be.

- Particularly sensitive: files, project documents, drafts and confidential information that pass through such channels.

- Proponents argue: Without such measures, it would hardly be possible to combat child abuse online. Companies that work cleanly have nothing to fear - on the contrary, they benefit from a safer digital environment.

- Critics counter: The technical implementation (e.g. „client-side scanning“) can practically undermine encryption and create an infrastructure that could be used to check any type of content - abuse today, possibly political or economic issues tomorrow. Business secrets and customer data are at risk because additional interfaces are created through which data can be leaked or misused. False alarms (false suspicions) can be damaging to the reputation of companies and employees, even if nothing has actually been done wrong.

3. AI in the company: labeling, documentation and liability

With the AI Act, the EU is introducing a risk-based framework: Different obligations apply depending on the area of application. Three areas are particularly relevant for SMEs:

- Marketing and communication: If texts, images or videos are created with AI - such as blog articles, social media posts or product images - labeling obligations may apply (e.g. „This content was created with the support of AI“), especially when it comes to politically sensitive areas or deepfakes.

- Customer service and decision-making processes: Chatbots or automated decision-making systems (scoring, applicant selection, risk assessment) can fall into the „high-risk AI“ category, depending on their characteristics, with strict documentation and transparency obligations.

- Internal tools and analyses: Even if external providers integrate AI functions (e.g. CRM systems, accounting, ERP), the company can be held responsible if it makes decisions based on AI recommendations.

- Supporters say: The AI Act protects customers and citizens from opaque or unfair decisions. It forces providers to exercise care, quality and transparency - which ultimately also benefits reputable SMEs that use AI responsibly.

- Critics point out: For smaller companies, the regulations quickly become confusing. They often do not know whether their use of AI already falls within the regulated area or not. There is a risk that SMEs will prefer not to approach AI at all due to uncertainty - and thus accept an innovation disadvantage compared to larger companies. In practice, documentation and labeling obligations could become a bureaucratic monster that is almost impossible to keep track of without specialist advice.

4. Reputation risks: visibility, blocking and „algorithm pressure“

The DSA puts platforms under greater pressure: they have to show that they are seriously combating risks such as disinformation, hate speech or legally problematic content. For companies, this means:

- Content that touches on controversial topics (health, politics, security, crises, society) can be downgraded more quickly - i.e. receive less visibility.

- Accounts can be temporarily locked, contributions removed or advertising campaigns blocked when platforms see „risks“ - even if the content is not clearly illegal.

- The boundary between legitimate criticism and „problematic content“ thus becomes more blurred - and in practice is in the hands of the respective platform moderation.

- Proponents argue: Platforms must take responsibility. Without effective mechanisms, misinformation and hate speech would remain unchecked. Companies that provide reputable content are more likely to gain something in the long term: a cleaner environment and more trust.

- Critics, however, see a strong tendency towards overblocking: for fear of fines, platforms prefer to delete too much rather than too little.

This can affect smaller companies in particular, which are dependent on visibility but have no lobby to challenge decisions.

The „algorithm pressure“ leads to content being adapted to supposed guidelines so as not to attract negative attention - which is tantamount to creeping self-censorship.

Who decides what is „information“ and what is „disinformation“?

When you look at the new EU regulations, one word keeps coming up: disinformation. It is to be combated - whether through platform obligations, risk assessments or algorithmic interventions. But the crucial question is rarely asked: Who defines what disinformation is in the first place?

This question is central, because it determines whether protective measures really protect - or whether they unintentionally lead to the control of public debates. In theory, it seems simple:

- False claims = disinformation,

- Correct statements = information.

In practice, however, the world is rarely so clear. Many topics are complex, fuzzy, controversial or constantly evolving. Studies change, experts argue, new facts emerge. What is considered wrong one day can suddenly be reassessed the next - just think of political forecasts, health debates or new technological risks.

This is why many critics warn against treating „disinformation“ as a fixed term. They emphasize that it is often less a matter of clear facts than of interpretation, shaped by the respective political or social situation.

Platforms make practical decisions - but under pressure

Formally, it is platforms that have to decide whether something is potentially disinformation because they are held responsible for risks under the Digital Services Act. But platforms do not act in a vacuum. They are under several types of pressure:

- Legal pressure: High fines if „risks“ are not sufficiently reduced.

- Political pressure: Expectation to give more or less weight to certain narratives.

- Public pressure: Campaigns, outrage, media reports that influence moderation decisions.

- Financial pressure: Independent moderation costs time, money and personnel - automated solutions are cheaper, but often more error-prone.

Under these conditions, platforms tend to delete or restrict too much rather than too little when in doubt. This means that they de facto decide what remains visible - even if they are not the actual legislator.

The role of governments and authorities

Another point is that governments or state agencies are becoming increasingly involved in these processes - for example in the form of:

- „Trusted Flaggers“

- Reporting offices

- Advice centers for platforms

- Assessments of „systemic risks“

This creates a system in which state institutions can indirectly influence which content is classified as problematic. This does not necessarily have to be abusive - but it creates a structure that is susceptible to abuse if other political majorities later prevail.

The danger of a „shift in what can be said“

When platforms sort content on the basis of vague terms such as „manipulation“, „social risks“ or „disinformation“, a cultural shift occurs in the long term:

- People express themselves more cautiously, companies avoid certain topics, critical voices lose visibility.

- The result is not an open ban - but a creeping invisibility. Content does not disappear, but it hardly reaches anyone.

Many critics do not see this as targeted censorship, but rather a side effect that is just as effective: what can be said is shifting, simply because every actor has to learn what is „desirable“ or „incriminating“ from the platforms.

Anyone who talks about disinformation must also talk about power.

Today, the decision about what is true, false, permissible or dangerous no longer lies solely with science, journalism or democratic debate, but increasingly with a mixture of actors and forces:

- Platforms

- Algorithms

- Government agencies

- Moderation teams

- External auditors

- Social pressure

This is precisely why it is important to look at this development not only in technical or legal terms, but also culturally. After all, it is ultimately about something very fundamental: how freely can a society speak if no one can clearly say who decides what is true and false - and by what standards?

Video: EU law censors the Internet (DSA - Digital Services Act) | Prof. Dr. Christian Rieck

Proponents vs. critics - the confrontation

To make the tensions clear, it is worth making a direct comparison. What supporters emphasize:

- Safety and protection: Children, consumers, democratic processes - all of these need a modern legal protective shield.

- Legal clarity: Uniform rules in the EU spare companies the patchwork of special national approaches. Those who adhere to clear guidelines have less legal uncertainty.

- Fairness: Large platforms and AI providers should not be „free riders“ who rake in profits and offload risks onto society. Regulations such as the DSA and AI Act would create order here.

- Trust: In the longer term, trust in digital services should increase if users know that rules apply and are monitored.

What critics emphasize:

- Risks to fundamental rights: Chat control and similar measures create a technical infrastructure that could be used to monitor all digital communication in the future - even beyond the original purpose.

- Bureaucratic overload: Small companies have neither the resources nor the know-how to meet the growing list of obligations (labeling, documentation, moderation, reporting channels) in a clean and sustainable manner.

- Privatized censorship: High fines turn platforms into „deputy sheriffs“ who, in case of doubt, delete more than necessary - at the expense of freedom of expression and the diversity of the debate. SMEs that thrive on reach can lose out without warning.

- Obstacle to innovation: Instead of daring to experiment, many small companies slow themselves down because they are afraid of doing something wrong. If you don't take risks, you don't break the rules - but you also stand still.

- Power shift: In the end, those who can best work the rules will prevail - large corporations with legal departments and compliance teams. Small companies have to play by the corporations' rules because they depend on their infrastructure.

Conclusion from the perspective of a small or medium-sized company

The most important things for a typical SME:

- Know the basics without getting lost in every detail.

- Check your own communication channels (comments, social media, newsletters, messenger) and consciously decide what you really need - and where you deliberately cut back.

- Document the use of AI and, if in doubt, communicate openly rather than having to explain later.

- Stay calm, but be attentive: there is no point in doing nothing because of all the laws. It is important to think step by step: „Where does this really affect me?“ - and to find solid solutions there.

The critics' main concern can be summarized in one sentence: It is not the individual law that is the problem, but the interaction of many rules that, piece by piece, creates a digital environment in which control and conformity are rewarded - and independent, critical voices have a harder time.

Data sovereignty as the basis for regulatory security

The increasing requirements of EU regulations such as the AI Act or the Digital Services Act present product managers with a clear task: systems must not only function, but also be traceable and controllable at all times. This is precisely where a dedicated, centrally controlled software structure shows its advantage. If you have your data, processes and interfaces under control, you can not only meet new requirements, but actively shape how they are implemented. A locally operated ERP solution such as gFM Business enables precisely this control: data is stored in your own system, processes are documented transparently and adjustments can be implemented in a targeted manner - without dependence on external platforms. This creates a stable basis for clearly mapping regulatory requirements, be it for documentation obligations, access controls or the traceability of decisions. For product managers, this means one thing above all: less uncertainty, clearer processes and the certainty that their own system will remain reliable even under increasing requirements.

What remains for ordinary citizens and small businesses?

If you look at all these developments, it becomes clear that the EU is trying to bring order to a digital world that has grown largely unregulated over many years. The goals sound understandable on paper - protection, security, transparency. At the same time, however, a dense network of regulations, testing mechanisms and technical requirements is slowly but noticeably enveloping our everyday digital lives.

For large corporations, this is a question of resources. They hire lawyers, adapt their processes and invest in new tools. For ordinary citizens and small or medium-sized companies, on the other hand, this development often feels confusing, sometimes even threatening - not because they have something to hide, but because they suspect that the nature of digital communication is changing.

What ordinary citizens should bear in mind

- Stay alert without driving yourself crazy. We don't need to panic, but we do need a healthy awareness that communication in the digital space is increasingly being monitored and filtered - not necessarily by people, but by systems, algorithms and automated checks.

- Maintain your own information channels. The more content is filtered and sorted, the more important it becomes to consciously use different sources of information. Not just social networks - but also traditional media, specialist portals and independent newsletters.

- Communicate consciously. You don't have to write every thought publicly. But it helps to realize that platforms are more likely to delete content than to leave it up. That doesn't mean making yourself small - but you should understand how the mechanisms work.

- Don't trust every AI - but don't be afraid of it either. If content is labeled as AI-generated in the future, this can help people not to take everything at face value. At the same time, we should not be deterred from using AI tools sensibly - with a sense of proportion.

What small and medium-sized enterprises should consider

- Consciously structure communication and platform presence. Comment sections, support forums, social media channels - all of these can be useful, but should be well maintained. The new regulation forces companies to take responsibility, but this can be handled professionally.

- Document AI usage. Not every tool is problematic, but companies should know where AI is being used - and communicate it openly when in doubt. This creates trust and prevents misunderstandings.

- Create clear internal rules. What content is published? Who checks complaints? Which platforms do you really use actively? A clear structure takes away a lot of uncertainty.

- Don't lose your own way. Small companies in particular thrive on their personality, their signature style and their direct customer relationships. No law forces them to lose this. On the contrary - in a world full of automated processes, genuine humanity almost seems like a competitive advantage these days.
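For readers who want to make the point about documenting AI usage concrete, here is a minimal sketch of what an internal AI usage register could look like in Python. Everything in it is an assumption for illustration: the field names, the example tools and the `needs_closer_look` rule of thumb are hypothetical and are not taken from the AI Act or any official template. The sketch only shows how little is needed to know where AI is being used.

```python
from dataclasses import dataclass, asdict
from datetime import date
import json


@dataclass
class AIUsageEntry:
    """One row in a hypothetical internal AI usage register."""
    tool: str               # name of the tool or feature (illustrative)
    purpose: str            # what it is used for
    area: str               # e.g. "marketing", "customer service", "internal"
    affects_people: bool    # does it feed decisions about customers or applicants?
    output_published: bool  # is AI-generated output shown to the public?
    responsible: str        # who in the company answers questions about it
    last_reviewed: date


def needs_closer_look(entry: AIUsageEntry) -> bool:
    """Flag entries worth discussing with an adviser.

    This is an internal rule of thumb (an assumption of this sketch),
    not a legal classification under the AI Act.
    """
    return entry.affects_people or entry.output_published


def to_json(entries: list[AIUsageEntry]) -> str:
    """Serialize the register so it can be stored or shared."""
    rows = []
    for e in entries:
        row = asdict(e)
        row["last_reviewed"] = e.last_reviewed.isoformat()
        rows.append(row)
    return json.dumps(rows, indent=2)


# Two made-up entries to show the idea
register = [
    AIUsageEntry("text assistant", "drafting blog posts", "marketing",
                 affects_people=False, output_published=True,
                 responsible="marketing lead", last_reviewed=date(2026, 1, 15)),
    AIUsageEntry("spell checker", "proofreading internal notes", "internal",
                 affects_people=False, output_published=False,
                 responsible="office manager", last_reviewed=date(2026, 1, 15)),
]

flagged = [e.tool for e in register if needs_closer_look(e)]
print(flagged)
```

A spreadsheet with the same columns would serve just as well; the point is simply that every AI use has a named purpose, a responsible person and a review date.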

Own content instead of external interventions: Why having your own magazine is strategically crucial

The current developments surrounding chat control, the Digital Services Act and the AI Act clearly show where the journey is heading: platforms and digital services are increasingly being regulated, monitored and held accountable. Large providers have to check content, assess risks and in some cases even implement content control measures. At the same time, there are proposals to analyze communication more closely or to capture content automatically, further intensifying the debate about privacy and control. In this environment, one point is often underestimated: Anyone who publishes their content exclusively via third-party platforms is inevitably subject to these rules and their interpretation. A magazine of your own on your own website, in contrast, follows a proven principle: content, structure and publication remain in your own hands. This not only creates independence, but also long-term stability. Especially in times of increasing regulation, this is not a luxury, but a strategic decision - towards more control, clear responsibilities and a platform that is not controlled from the outside.

Unclear effect in an emergency: What happens in a state of tension?

It remains completely unclear how the entire package of EU censorship laws - from the Digital Services Act to extended control powers - would play out in an actual state of tension. There is no reliable experience of how the multitude of provisions will interact in practice if national governments suddenly justify far-reaching interventions in communication and information freedoms with reference to external threats or hybrid attacks.

It is currently not clear to either citizens or companies which restrictions could take effect in an emergency, which mechanisms exist to defend against them - or whether individual measures would even come into force automatically in a state of emergency.

An in-depth look at possible scenarios in a domestic state of emergency can be found in the article on the state of tension in Germany - a perspective that has so far received too little attention in the current political discourse.

One last thought

The digital future will be characterized by rules. This is unavoidable - and necessary in many areas. But the decisive factor is how we deal with them as citizens and entrepreneurs. Those who stay informed, communicate consciously and are not intimidated by complicated terms will retain their freedom - even in a more heavily regulated environment.

Perhaps that is the real message: don't blindly trust, don't blindly reject. Instead, understand things, classify them - and then decide for yourself which path you want to take.

Frequently asked questions

- What exactly does the EU want to achieve with these new digital laws?

The EU wants to make the internet safer, combat abuse, curb the spread of illegal content and increase transparency about media and digital services. The idea behind this is that the current legal framework is no longer sufficient because digital services now have a huge impact on society and democracy. The objectives seem understandable, but the means planned to achieve them are far-reaching and sometimes controversial.

- Why do some people talk about „censorship laws“, even though the EU denies this?

The term arises from the concern that high fines and unclear definitions („systemic risks“, „disinformation“) will cause platforms to delete content as a precautionary measure. This effectively creates a privatized form of censorship, even if it is not officially referred to as such. Critics see the problem not so much in the legal text itself, but in the way platforms react to it.

- What makes chat control or CSAR so controversial?

Because this law potentially allows private communications to be scanned automatically. In technical terms, this often means „client-side scanning“, where content is checked before it is encrypted. Critics say that this creates an infrastructure that could basically monitor any communication - regardless of whether you are doing something illegal or not.

- Has chat control already been decided?

No. There is massive resistance, especially from Germany and other EU countries. The draft is politically deadlocked and parts of it have been revised several times. But it is not off the table either - it continues to hover over the negotiations.

- What does the Digital Services Act (DSA) mean for ordinary people?

Contributions, comments or posts on large platforms can be removed more quickly if platforms classify something as potentially illegal or risky. Users must be prepared for the algorithmic „filter“ to become tighter. At the same time, there are more transparency rights - it is easier to find out why a post has been blocked.

- How does the DSA affect small and medium-sized enterprises?

Companies that use social media, allow blog comments or use online store platforms are indirectly affected. Their content can be subject to restrictions more easily - and they have to pay more attention to their own moderation. Errors in comment sections or forums can lead to legal problems.

- What does the new Media Freedom Act (EMFA) regulate?

It is intended to protect the independence and diversity of the media. This also means that platforms are not allowed to downgrade or block recognized media without good reason. However, critics fear that this will create a kind of media hierarchy in which established media are favored and alternative voices are disadvantaged.

- Can a company lose „privileged“ visibility through the EMFA?

Yes - not directly, but indirectly. Content from small blogs or company websites competes with „recognized media“ whose visibility is algorithmically protected. This can mean that company content appears less prominently on platforms because platforms have to apply more careful moderation strategies for media.

- What does the AI Act change for citizens?

In future, a lot of AI content - images, texts, videos - will have to be labeled as AI-generated. This is intended to create transparency so that people can recognize whether a human or an algorithm is the author. This is particularly important for political topics or topics that are susceptible to manipulation.

- And what does the AI Act mean for companies?

Companies must check whether and how they use AI: in marketing, customer service or internally. Depending on the risk level, they must document, mark or explain how AI is used. This can mean additional work, but also protects against legal problems in the long term.

- Why is the combination of all these laws so critical?

Because each law has its own function, but it is only when they work together that they have a greater impact: surveillance of private communications (CSAR), greater platform control (DSA), media hierarchies (EMFA) and AI labeling requirements (AI Act). Critics say that this creates an environment in which control, conformity and caution dominate - with consequences for debate, creativity and freedom.

- What do experts advise ordinary citizens to do when dealing with these changes?

Stay calm, but attentive. Maintain your own information channels, don't leave everything to a single platform and consciously handle private or sensitive content. It is important to understand that digital communication is no longer moderated „neutrally“, but is increasingly controlled by rules.

- How can citizens defend themselves against wrong decisions made by platforms?

The DSA gives you new rights: you can challenge moderation decisions, submit complaints and demand a justification. This is progress - but requires time, perseverance and sometimes legal support.

- What specific preparations should small companies make in the coming years?

They should create clear internal rules for communication, better control comment areas, document AI usage and check which platforms they really need. It is worthwhile not making everything public that can be regulated internally.

- Who are the serious critics of these laws?

Patrick Breyer (European Parliament), European Digital Rights (EDRi), the messenger app Signal, IT security researchers and numerous media law experts. Their criticism is based less on ideology than on technical and constitutional concerns.

- Are all these laws ultimately about censorship?

It depends on who you ask. Supporters say it's about security and order. Critics say: even if „censorship“ is not in the law, obligations, penalties and risk aversion create a de facto restriction of visibility and freedom. The truth is probably somewhere in between - but the trend is clear: communication spaces are becoming more regulated.

- Are these new rules dangerous for democracy?

Not necessarily per se - but they can become so if they are implemented incorrectly or later politically expanded. Democracy thrives on open debate, criticism and diversity. If platforms delete too much out of fear or if communication channels are monitored, this can weaken democratic culture.

- How should a company or citizen react in the long term?

The best way is with a mixture of informed composure and practical preparation. You shouldn't be intimidated, but you shouldn't be naïve either. If you choose your communication channels wisely, create transparency and protect your own data, you will remain capable of acting - even in an environment that is becoming more regulated.